Redefining “Fit” with Equity in Mind

Designing evaluation systems that reduce bias without reducing signal

Role: Product Designer

Scope: Research · Systems design · Prototyping

Duration: 4 weeks - Team Project, August 2025

Platform: Web UX

Team: Class project

Problem

Hiring teams increasingly rely on AI-driven systems to screen candidates, but most tools operate as black boxes. Recruiters and HR leaders lack visibility into how algorithms score candidates, how bias is introduced, and how to intervene without breaking efficiency or trust.

“Culture fit” is often used as a shorthand for evaluating people, but in practice it introduces bias into decision-making.

Across hiring and evaluation systems, “fit” is frequently:

Loosely defined

Subjectively applied

Inconsistently measured

This creates outcomes where:

Decisions feel justified but are hard to audit

Bias hides behind intuition

Equity goals conflict with perceived quality signals

Challenge: How might we redesign evaluation systems so they remain effective while reducing bias and subjectivity?

Why this matters

When hiring tools are opaque, teams default to gut decisions or over-trust automation. This reinforces bias, reduces confidence in outcomes, and turns “fair hiring” into a checkbox rather than an operational practice.

Reframed insight

We initially explored hiring from the job seeker’s perspective, interviewing applicants navigating AI-driven systems. These conversations revealed frustration, opacity, and emotional fatigue, but also surfaced a critical shift:

Ethical hiring must start with the companies designing and operating these systems.

We pivoted the product focus to HR teams and talent leaders, grounding the solution in how real hiring decisions are made under pressure.

Design principles

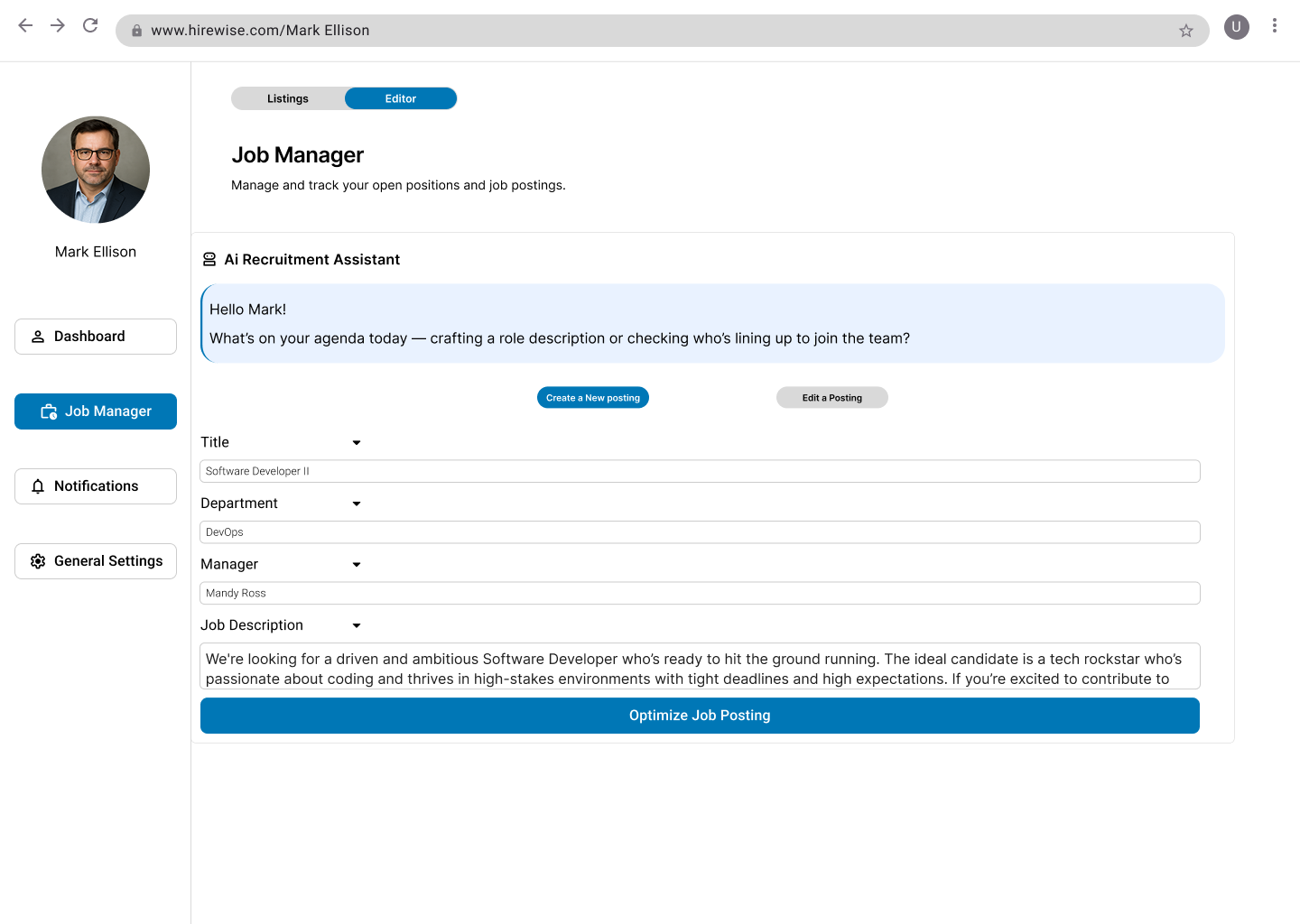

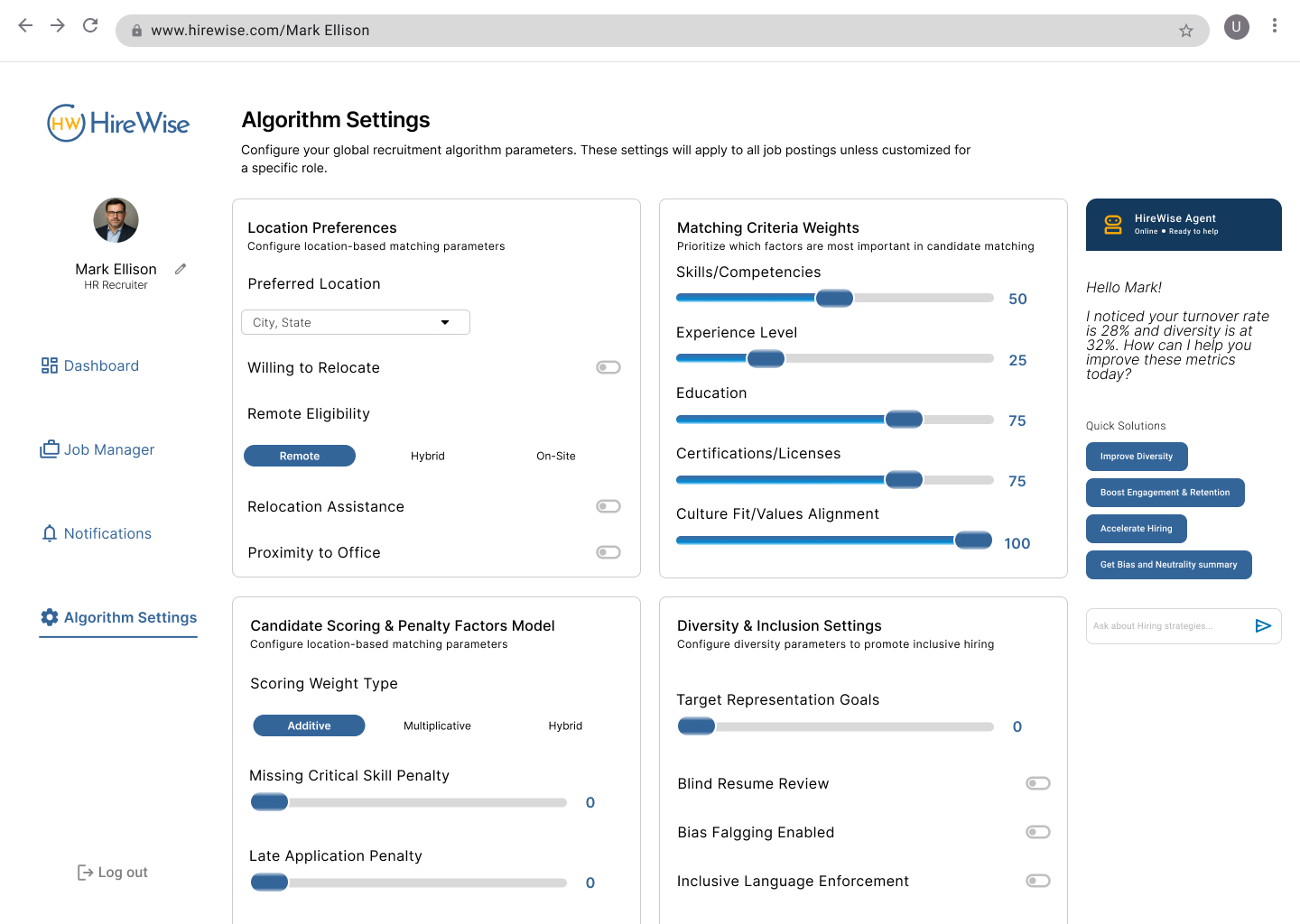

We designed multiple concepts and moved forward with an AI assistant prototype that provides inclusive language prompts, equity scoring, and bias-aware job description suggestions to help guide recruiters.

Based on the findings, we defined three guiding principles:

Make evaluation criteria explicit: Replace vague judgments with observable signals.

Separate values from performance signals: Prevent cultural alignment from overriding capability.

Design for auditability: Enable reflection and accountability in decision-making.

Solution

We designed a structured evaluation framework that reframes “fit” as evidence-based alignment rather than intuition.

The core experience supports evaluators in:

Assessing candidates against explicit criteria

Documenting evidence for each judgment

Reflecting on decisions before final selection

Key design decisions included:

Replacing binary fit judgments with clearly defined dimensions

Anchoring evaluation to behaviors and outcomes, not personality

Making tradeoffs visible, so decisions could be examined and discussed

The system was designed to preserve hiring signal while reducing subjective bias.
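The design decisions above can be sketched as a small data model. This is a minimal illustration, not the prototype's actual implementation: the names `Rating`, `Evaluation`, `missing_evidence`, and `summary`, the 1 to 4 scale, and the example dimensions are all assumptions made for the sketch. It shows each judgment tied to a named dimension, evidence required per rating, and values kept separate from performance so neither can silently override the other.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Rating:
    dimension: str      # hypothetical dimension name, e.g. "problem solving"
    kind: str           # "performance" or "values", kept separate on purpose
    score: int          # hypothetical 1-4 scale anchored to observed behaviors
    evidence: str = ""  # the behavior or outcome that supports the score


@dataclass
class Evaluation:
    candidate_id: str
    ratings: list[Rating] = field(default_factory=list)

    def missing_evidence(self) -> list[str]:
        """Return dimensions that were scored without documented evidence."""
        return [r.dimension for r in self.ratings if not r.evidence.strip()]

    def summary(self) -> dict[str, float]:
        """Average scores per kind so tradeoffs stay visible side by side."""
        result: dict[str, float] = {}
        for kind in ("performance", "values"):
            scores = [r.score for r in self.ratings if r.kind == kind]
            if scores:
                result[kind] = sum(scores) / len(scores)
        return result


# Example with made-up data: one rating lacks evidence and gets flagged.
evaluation = Evaluation(
    candidate_id="c-001",
    ratings=[
        Rating("problem solving", "performance", 4, "Led a root-cause review"),
        Rating("delivery", "performance", 2, "Missed two of three milestones"),
        Rating("collaboration", "values", 3, ""),
    ],
)
```

Reporting the two averages separately, rather than as one blended "fit" number, is what keeps a strong values score from masking a weak performance score during discussion.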

Research and testing

We explored how people interpret and apply “fit” across hiring and evaluation contexts through interviews and secondary research. One core insight emerged:

Bias often enters not through intent, but through unstructured judgment.

Three patterns consistently surfaced:

Ambiguous criteria amplify bias: When expectations aren’t explicit, evaluators default to familiarity.

Gut feel is treated as signal: Intuition fills gaps left by poorly defined frameworks.

Equity fails at the system level, not the individual level: Well-intentioned people still produce biased outcomes within vague systems.

Bias in hiring is not primarily a job seeker problem; it’s a systems problem. If we want equitable outcomes, HR teams need control, transparency, and guidance, not just automation. This reframed the problem from mindset to mechanism design.

We interviewed 5 HR and business professionals (Directors of HR, Talent Leads, Consultants) and observed that their frustrations centered on bias patterns and lack of feedback. We then iterated on the prototype based on their feedback:

Simplified crowded AI suggestions.

Grouped quick actions for clarity.

Added tooltips and clearer hierarchy.

Improved accessibility through spacing and control states.

Outcomes

This work validated a key hypothesis:

Bias decreases when judgment is structured, not eliminated.

Early feedback indicated:

Clearer alignment between values and decisions

More productive discussions during evaluation

Increased confidence in outcomes

Participants found the interface easy to use and appreciated the ethical focus.

The AI helper was described as “helpful and neat” for crafting inclusive language.

Users liked fairness reminders but asked for more control and transparency.

The prototype showed how equity can be part of the workflow, not an afterthought.

What we learned

Clarity beats complexity: Simple, well-organized AI suggestions built trust.

Transparency is critical: Recruiters wanted to understand how equity scores were generated.

Iteration matters: Small changes in wording and layout improved confidence in the design and made it feel more professional.

What we would improve next

If developed further:

Strengthen accessibility with WCAG standards.

Increase algorithm transparency through clearer settings.

Measure impact with metrics such as recruiter confidence, time saved per posting, and diversity of hires.

Test the framework in live hiring contexts.

Measure consistency across evaluators.

Explore adaptation beyond hiring (performance reviews, promotions).
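As one possible way to approach the "consistency across evaluators" item, agreement between two evaluators scoring the same candidates could be summarized with Cohen's kappa, a standard statistic that corrects raw agreement for agreement expected by chance. This was not part of the project; the function name `cohens_kappa` and the sample scores are assumptions for illustration only.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters on the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty item list")

    n = len(rater_a)
    # Share of items where both raters gave the identical score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Agreement expected if each rater scored independently at their own rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical score; treat as full agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up scores from two evaluators for the same five candidates.
scores_a = [3, 4, 2, 3, 1]
scores_b = [3, 4, 2, 2, 1]
kappa = cohens_kappa(scores_a, scores_b)
```

A value near 1 suggests the criteria are being applied consistently, while a value near 0 suggests agreement is no better than chance, which would point back to ambiguous criteria. For more than two evaluators or ordinal scales, a different statistic would be a better fit.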

Impact

This project reinforced that equity is a design constraint, not a separate initiative, and reframed AI in hiring from a screening mechanism into a transparent, bias-aware decision partner.

The system empowers HR teams to move faster without surrendering ethics, and to operationalize fairness rather than treating it as a downstream outcome.